According to a study by Gartner, by 2026, more than 80% of search engine result pages will include generative AI summaries powered by large language models.

As AI-driven search engines and answer engines become part of everyday search behavior, understanding how LLMs choose content is no longer optional. It’s a practical skill for anyone who creates content and wants visibility beyond traditional rankings.

Large language models don’t scan the web the same way classic search tools do. They don’t simply list links. Instead, they interpret, weigh, and assemble information into a single response. That shift changes how content should be drafted, arranged, and managed.

In this blog post, we’ll talk about:

- How LLMs choose content when forming a final answer

- Why some pages influence AI summaries more than others

- How to optimize content for AI answers

- How to adapt your content without sounding robotic

What Are Large Language Models and How Do They Work?

Large language models are AI systems trained to understand and generate text one word at a time. Tools such as ChatGPT, Gemini, and Perplexity are common examples of how large language models now interact with users through conversational answers rather than lists of links.

At their core, these language models work by predicting the next token, a word or part of a word, based on context. They rely on a probability distribution, choosing each next word by calculating which option best fits what came before.
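To make next-token prediction concrete, here is a minimal sketch in Python. The candidate tokens and their scores are invented for illustration; a real model scores tens of thousands of tokens using learned weights, and most products sample from the distribution rather than always taking the top choice.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


# Hypothetical scores a model might assign to candidate next tokens
# after the context "The capital of France is".
logits = {" Paris": 6.0, " Lyon": 2.5, " a": 1.0, " pizza": -2.0}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # greedy choice: the most probable token

for tok, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{tok!r}: {p:.3f}")
print("chosen:", repr(next_token))
```

The point of the sketch is that the model never looks up an answer. It ranks possible continuations and picks one, then repeats the process for the following token.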

Where Do LLMs Learn From?

LLMs learn patterns from vast training data, which includes public text, licensed sources, and human-created examples. This doesn’t mean they store websites or recall exact articles. Instead, they absorb language patterns, factual relationships, and ways ideas connect.

When users ask a question, the model doesn’t “search” in real time like traditional search engines. It generates an answer based on learned patterns, context, and, when available, live retrieval systems such as retrieval-augmented generation (RAG).

This distinction matters because it directly affects how LLMs choose content when forming responses.

Difference Between Search Engines and LLMs

Traditional search engines and LLMs aim to help users find information, but they take very different paths to get there.

A search engine ranks pages based on signals like relevance, links, and site authority. The output is a list of results. The user decides what to read.

LLMs, on the other hand, produce a final answer. They blend information from multiple sources, compress ideas into fewer tokens, and present a single explanation.

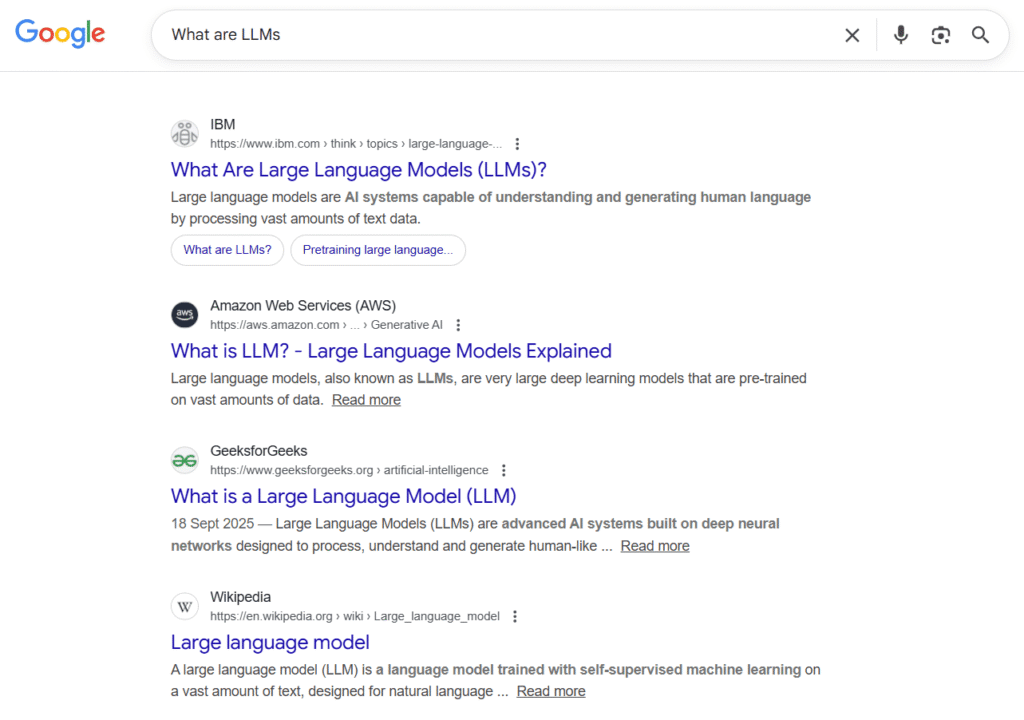

Here’s an example of an AI-generated answer in a search engine:

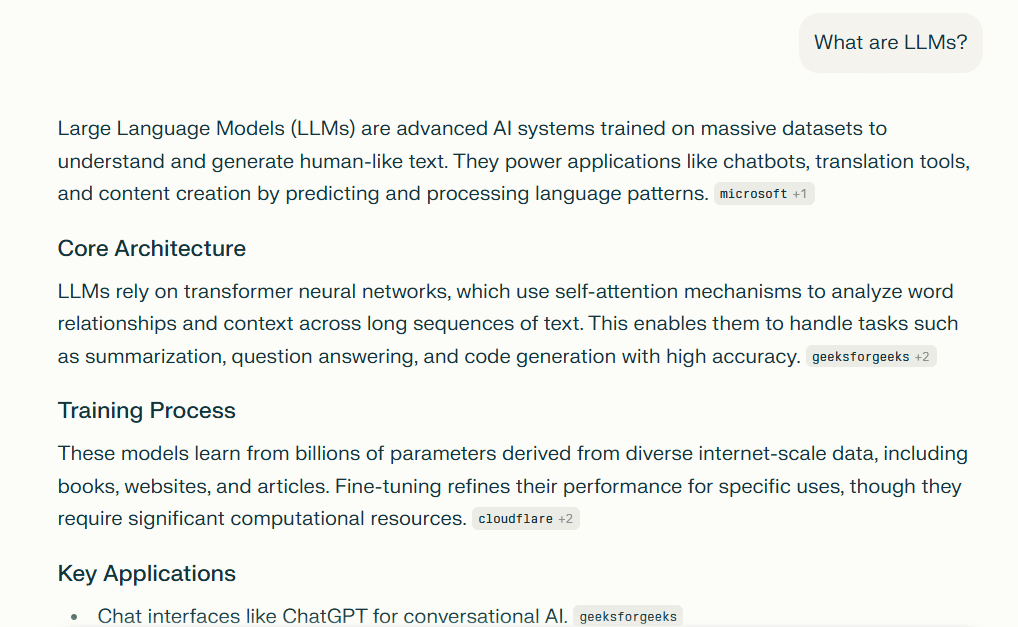

To make this concrete, here’s one more example of an AI-generated answer, this time on Perplexity:

Why Content Structure Changes for LLMs

Because LLMs generate answers rather than ranking pages, content needs to be:

- Easier to interpret without scanning the full page

- Clear in intent and focused on one idea at a time

- Structured so AI tools can extract relevant information

This is where many sites struggle. Content written only for traditional search often ends up in the wrong part of an AI response or skipped altogether.

How Large Language Models Choose Content to Form a Final Answer

Let’s get to the core question: how LLMs choose content when responding to a query.

LLMs don’t rely on a single page or a single site. Instead, they evaluate patterns across many sources, weigh relevance, and assemble an answer that fits the user’s intent.

This shift isn’t theoretical. Major AI and search platforms have publicly explained how answer generation now works. Google has stated that AI overviews summarize information directly within search results, while OpenAI notes that LLMs synthesize insights from many sources instead of pulling content from a single page.

As a result, visibility today depends on how well content fits into AI-generated answers, not just where it ranks.

At a high level, LLM content selection involves:

- Understanding the query and its context

- Identifying relevant information patterns

- Ranking likely answer components by usefulness

- Generating a coherent response with clear meaning

How Does LLM Content Selection Work?

When a user enters a query, the model evaluates context, intent, and nuance. It doesn’t just look for keyword matches. It looks for semantic meaning: what the question is really asking.

This process explains why focused paragraphs and clear headings matter. When one idea is buried inside a long block of text, the model may miss it or misinterpret it.

What Is the Role of Training Data?

Training data teaches the model how concepts relate to each other. It shapes how LLMs decide what sounds correct, helpful, and complete.

However, this alone isn’t enough for current AI search experiences. That’s why many AI engines combine models with live data sources, structured outputs, and search agents that retrieve up-to-date content.

Together, these systems influence how LLMs choose content in real-world answers.
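The combination of a model with live retrieval can be sketched in a few lines of Python. This is a toy, not how any particular AI engine works: word-overlap similarity stands in for the embedding search real systems tend to use, the passages are made up, and no model is called. The sketch only shows the retrieve-then-prompt pattern.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, passages, k=2):
    """Return the k passages most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        passages, key=lambda p: cosine(q, Counter(tokenize(p))), reverse=True
    )
    return ranked[:k]


def build_prompt(query, passages):
    """Assemble the text a model would receive: retrieved context plus the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical passages pulled from different pages.
passages = [
    "Schema markup helps machines identify what a page is about.",
    "Our summer sale offers 20% off all plans.",
    "Clear headings let a model match a section to a question.",
]
query = "How do headings help a model answer a question?"

top = retrieve(query, passages, k=2)
prompt = build_prompt(query, top)
print(prompt)
```

Notice that the promotional passage shares no vocabulary with the question, so it never reaches the prompt. Only passages that make it into the context can shape the final answer.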

Let’s understand this with an infographic:

How LLMs Choose Sources

LLMs don’t rely on only one website. Instead, they combine patterns from many trusted sources to form a response.

When people ask how LLMs choose sources, the key thing to understand is this: models don’t browse like humans. They don’t “click” links. They synthesize.

That synthesis is shaped by:

- Domain authority signals

- Consistency across multiple sources

- Clear explanations written in plain English

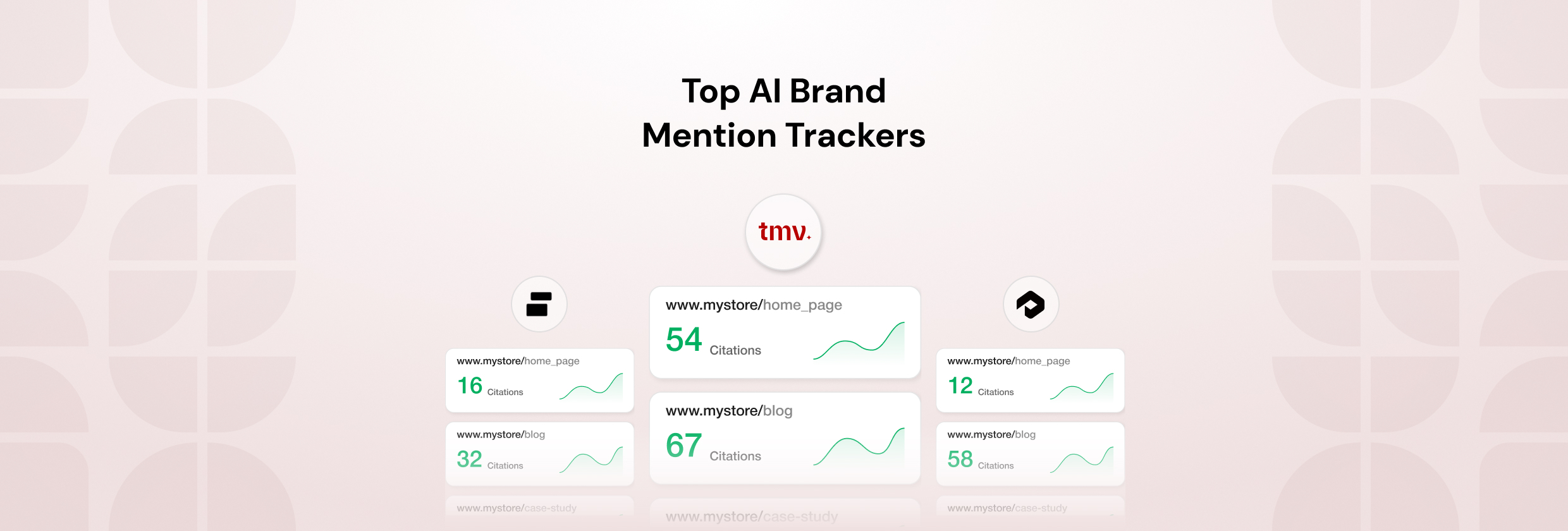

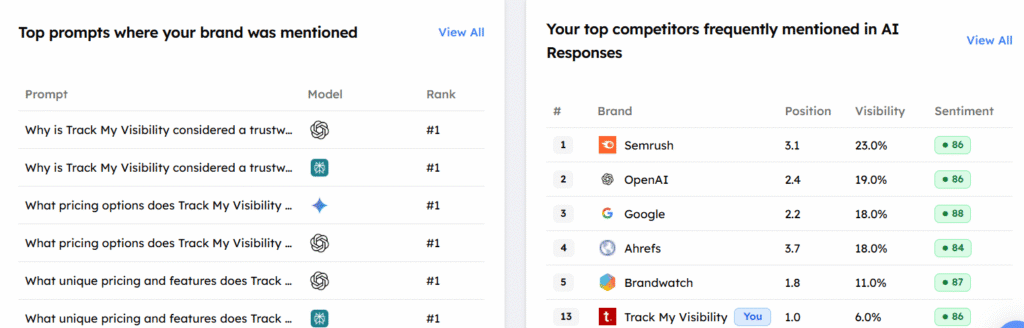

Tools like Track My Visibility help site owners understand whether their content appears in AI results, even when no direct click happens.

What Makes the Source Valuable for LLMs

A valuable source is one that explains concepts clearly, avoids confusion, and sticks to one idea per section. LLMs favor content that reduces interpretation effort.

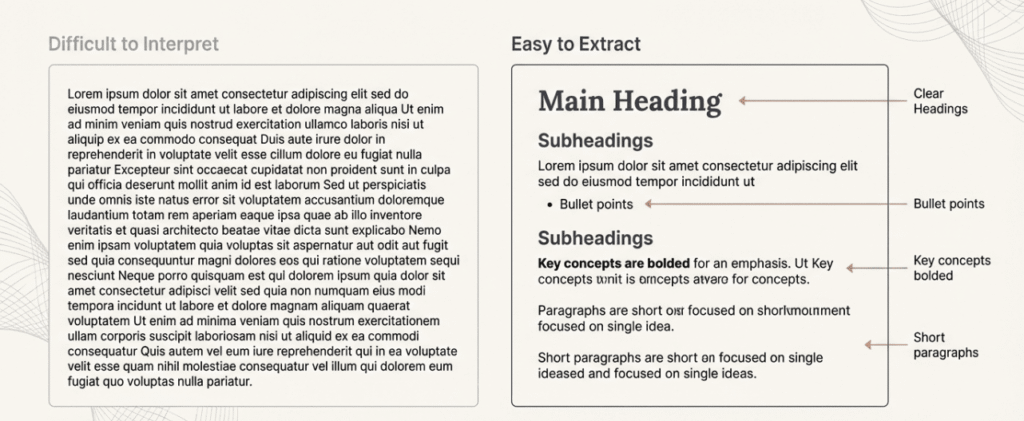

For example, a page with clear headings, bullet points, and direct explanations is easier for AI comprehension than a long narrative with shifting topics.

Why a Few Websites Are Referenced More Often

Some sites appear repeatedly in summaries because they:

- Publish consistent, educational content

- Use structured data and schema markup correctly

- Maintain clarity across multiple related topics

This repetition teaches AI systems which sources are reliable when assembling answers.

Tools like Track My Visibility make this behavior visible by showing which brands are consistently mentioned across AI responses for related prompts.
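As one illustration of the schema markup mentioned above, the following Python sketch builds a schema.org `FAQPage` object as JSON-LD. The question and answer text are placeholders and `faq_jsonld` is a hypothetical helper name. Whether a given AI system reads this markup is not guaranteed, so treat it as a way to make page intent explicit rather than a visibility switch.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage structure from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content; replace with the real questions on your page.
faq = faq_jsonld(
    [
        (
            "How does an LLM generate its answers?",
            "It predicts the next token based on context.",
        )
    ]
)

markup = json.dumps(faq, indent=2)
# JSON-LD is typically embedded in a script tag in the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```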

Signals LLMs Use to Trust Content

Trust plays a major role in how LLMs choose content. AI trust signals help models decide which information feels safe to include.

These signals fall into several categories.

1. Content Quality Signals

High-quality content focuses on relevance and clarity. It answers real questions without unnecessary filler. LLMs tend to ignore pages that sound promotional or vague.

Important quality markers include:

- Clear definitions

- Logical flow

- Accurate explanations without exaggeration

2. Website-Level Trust Signals

Beyond individual pages, AI systems look at site-wide patterns. A site that consistently publishes helpful information builds credibility over time.

This is one reason why building topic authority matters more than chasing single keywords.

3. Language and Tone Signals

Tone matters more than many people expect. Content that sounds natural and thoughtful helps AI interpretation. Content that tries too hard to impress can intimidate human readers and confuse AI systems as well.

In my opinion, writing as if you’re explaining something to a curious colleague often works best.

How Content Structure Affects LLM Understanding

Structure isn’t just for humans. It’s one of the strongest signals for AI readability.

LLMs rely on structure to identify which parts of a page answer which questions. Here are the two major ways to improve the content structure for LLM understanding:

1. Use of Headings and Subheadings

Clear headings help language models map ideas. When headings match user intent, content is more likely to be included in AI-generated results.

This is especially important for how LLMs choose content, because models often extract information from specific sections rather than entire pages.
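Section-level extraction can be pictured with a short Python sketch. It assumes a page written with Markdown-style `#` headings, which is an assumption made for illustration; real pipelines parse HTML and use their own chunking rules. The function name `split_sections` is hypothetical.

```python
import re


def split_sections(markdown_text):
    """Return {heading: body} for each '#'-style heading in the text."""
    sections = {}
    heading = None
    for line in markdown_text.splitlines():
        match = re.match(r"^#{1,6}\s+(.*)$", line)
        if match:
            # A new heading starts a new section.
            heading = match.group(1).strip()
            sections[heading] = []
        elif heading is not None and line.strip():
            # Non-empty lines belong to the most recent heading.
            sections[heading].append(line.strip())
    return {h: " ".join(body) for h, body in sections.items()}


page = """\
## What is schema markup?
Schema markup is structured data that labels what a page contains.

## How do I add it?
Place a JSON-LD script in the page head.
"""

sections = split_sections(page)
for heading, body in sections.items():
    print(f"{heading} -> {body}")
```

When each heading reads like a question a user might ask, every chunk becomes a self-contained answer that can be lifted without the rest of the page.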

2. Add Formatting That Helps LLMs

Helpful formatting includes:

- Bullet points for lists

- Short paragraphs with one idea

- Bold text for key concepts

- FAQ sections for direct questions

Structured content makes it easier for AI systems to locate the relevant passage and improves interpretation accuracy.

Let’s understand it more clearly with an infographic:

How to Optimize Content for LLM Content Selection

Optimizing for content selection doesn’t mean abandoning SEO. It means expanding your approach.

1. Write for Clarity, Not Just Keywords

Keywords still matter, but clarity matters more. Use them naturally, and avoid repeating phrases in a way that feels forced.

Remember, LLMs decide based on meaning, not keyword density.

2. Focus on Answering Real Questions

Every page should address a clear query. Ask yourself: What question does this page answer better than any other page on my site?

This mindset aligns with how AI engines evaluate relevance.

3. Build Topic Authority Over Time

One strong article helps. A group of connected articles helps more.

When content across your site reinforces the same topic, AI systems gain confidence in your expertise. This is where platforms like Track My Visibility become useful for measuring long-term LLM visibility.

Common Reasons Why LLMs Ignore Content

Sometimes, content doesn’t appear in AI-generated answers for reasons that have nothing to do with rankings or visibility tools. More often, it comes down to how the information is written, structured, and framed for understanding. Since LLMs process meaning differently from humans, small missteps can cause them to skip content altogether.

Reason 1: Promotional Content

Content that reads like an advertisement instead of an explanation is often filtered out. Overly sales-focused language rarely adds clarity or educational value, which makes it less useful in AI mode. LLMs tend to prioritize information that helps users understand a topic, not content that pushes a product or service.

Reason 2: Surface-Level Explanation

Pages that only repeat basic definitions or widely known facts struggle to stand out. If there’s no depth, context, or original explanation, the content doesn’t contribute much to an AI-generated answer. From the model’s perspective, shallow explanations add little that improves the chance of producing a helpful response.

Reason 3: Poor Content Structure and Format

Long blocks of text, unclear headings, or multiple ideas crammed into one section are harder to interpret. AI models rely on structure to extract meaning, and when that structure is missing, valuable information can be overlooked. Clear sections and focused paragraphs make content easier to process and reuse.
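A quick way to spot long blocks of text in a draft is a small Python check like the one below. The 80-word threshold and the name `long_paragraphs` are arbitrary choices for this sketch, not a rule any AI system is known to apply. The check also assumes paragraphs are separated by blank lines.

```python
def long_paragraphs(text, max_words=80):
    """Return (paragraph_number, word_count) for paragraphs over max_words."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [
        (number, len(p.split()))
        for number, p in enumerate(paragraphs, start=1)
        if len(p.split()) > max_words
    ]


# A made-up draft: two short paragraphs around one 120-word block.
sample = (
    "Short intro paragraph.\n\n"
    + " ".join(["word"] * 120)
    + "\n\nShort closing line."
)

flagged = long_paragraphs(sample)
print(flagged)
```

Any paragraph the check flags is a candidate for splitting into smaller sections with one idea each.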

Reason 4: Little Educational Value

LLMs consistently favor content that explains why something works and how it applies, not just what it is. Educational writing supports better understanding and leads to stronger business outcomes, especially when users rely on AI answers to make decisions.

Future of LLM Content Selection

Looking ahead, how LLMs choose content will continue to evolve.

- LLMs will rely more on trusted sources and consistent patterns

- Educational and explanatory content will matter more than ever

- Brands will need to think about AI visibility, not just rankings

Zero-click answers, AI overviews, and answer engines will keep growing. That doesn’t mean websites lose value. It means value shifts toward clarity and usefulness.

Final Thoughts

Understanding how LLMs choose content helps you write for both AI systems and human readers. The goal isn’t to game the model. It’s to communicate clearly, structure ideas thoughtfully, and answer real questions.

As AI search becomes more common, content that respects context, relevance, and readability will stand out.

Next Step: Audit Your AI Visibility

If your content matters to your business, it needs to be visible where users now get answers. Run an AI visibility check, review how your pages are interpreted, and identify gaps before competitors do.

Use Track My Visibility to monitor where your content appears in AI answers and understand how LLMs evaluate it over time.

In the end, the best strategy is simple: write content that explains, not impresses. When you do that consistently, both users and AI engines are more likely to listen.

Frequently Asked Questions

How does an LLM generate its answers?

An LLM generates answers by predicting the next word based on context, not by searching for a single page. It evaluates patterns learned during training and selects words with the highest likelihood of fitting the question. In some cases, systems like retrieval augmented generation allow the model to reference fresh or external information before forming a response.

How does an LLM understand the content it processes?

LLMs understand content by analyzing meaning, relationships between words, and context across sentences. Instead of reading like humans, the AI model identifies patterns that help with interpreting nuanced content, such as intent, tone, and topic relevance. This allows it to summarize or explain ideas accurately without memorizing exact text.

How should you structure content for LLMs?

Content should be written with clear headings, focused paragraphs, and one idea per section. This makes it easier for language models to extract relevant information without confusion. From a generative engine optimization perspective, clarity matters more than keyword repetition.

How does an LLM choose its next action?

LLMs choose their next action by evaluating probabilities for possible next steps, whether that’s generating text, summarizing, or answering follow-up questions. This process relies on a decoding strategy, such as always taking the most likely token or sampling among likely ones, that balances accuracy with coherence. Essentially, each response builds on context rather than acting in isolation.