Brands are publishing more content than ever. They’re running checklists, adding schema, tweaking their robots.txt, and still not showing up in AI-generated answers.

The problem is…

Most GEO advice is outdated, and it doesn’t match how AI models actually choose content now. And if you’re making the GEO mistakes covered in this article, you could be doing everything “right” by traditional standards and still be invisible to AI engines.

For generative engine optimization, the rules aren’t just different. The scoring system is also different.

Google AI Overviews now appear in 25.11% of all Google searches, up from 13.14% in early 2025. Ranking isn’t enough anymore if the AI answer above your result already answered the question.

Here’s what you’ll learn:

- Why blocking the wrong bots can hand your authority to competitors

- How AI models evaluate content (and why keyword density is irrelevant)

- The structural and technical issues that make your content unreadable to AI crawlers

- Why entity consistency matters more than most brands realize

- How to measure AI visibility the right way

Let’s get into it!

Key Takeaways

- Blocking the wrong AI bots can make you completely invisible to ChatGPT, Perplexity, and Claude search, even if your content is excellent.

- AI models skip marketing fluff and extract facts; your content needs to lead with direct answers, not branded prose.

- Entity inconsistency across platforms causes AI models to either guess wrong about your brand or defer to a competitor.

- GEO is not a replacement for SEO. It’s a layer on top of it, and abandoning either one will cost you visibility.

- Clicks are the wrong metric now; if you’re not tracking AI citations, you don’t actually know how visible your brand is.

How AI Models Actually Evaluate Content

Before we get into the mistakes, let’s be clear on what you’re actually trying to do here.

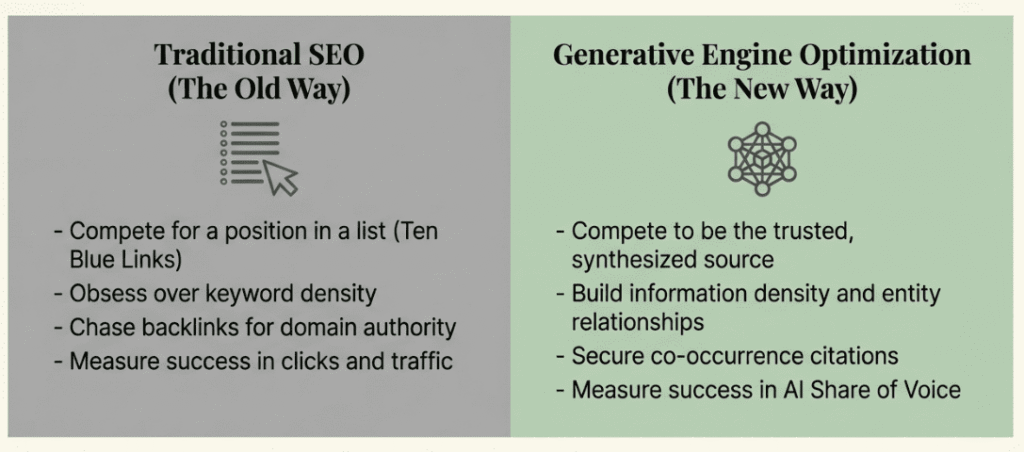

Traditional SEO was basically a ranking competition. You chased backlinks, obsessed over keyword density, and fought for a spot in the ten blue links. Page one meant you existed. Anything beyond that? You might as well not have published.

AI search has flipped that entirely. Generative engines don’t rank things in a list; they synthesize. They pull from sources they find authoritative, accurate, and clearly structured, then stitch together one coherent answer. If the model picks you, you’re the source. If it doesn’t, you’re gone from that conversation, and it doesn’t matter that you’re ranking #2 organically.

Here’s what these models are actually looking at: information density and entity relationships. Not how many times you used a phrase. They want to know how well you explain how things connect to each other. And while an LLM can process a lot of text, it’s not patient. Every sentence you waste on marketing fluff or recycled SEO-speak makes it harder for the model to extract the facts it needs to feel confident enough to cite you.

Freshness matters more than most brands expect, too. AI summaries lean hard toward current information. A page that hasn’t been touched in a year or two reads as a credibility problem, not just a housekeeping issue. And no, just changing a publish date in your metadata doesn’t fix it. The model needs to see that the actual information is still relevant today.

The mindset shift is simple to say and harder to actually do: stop trying to win a position in a list. Start trying to be the source a model trusts enough to quote. That’s a different goal, and it pushes you toward writing that’s more direct, more factual, and a lot less padded than what most content teams are used to producing.

The Most Common GEO Mistakes Brands Make

Most GEO mistakes don’t announce themselves. They look like normal content decisions, reasonable technical choices, or sensible caution. Here’s where brands are actually losing their AI-driven visibility and what to do about each one.

Mistake #1: Blocking the Wrong AI Crawlers

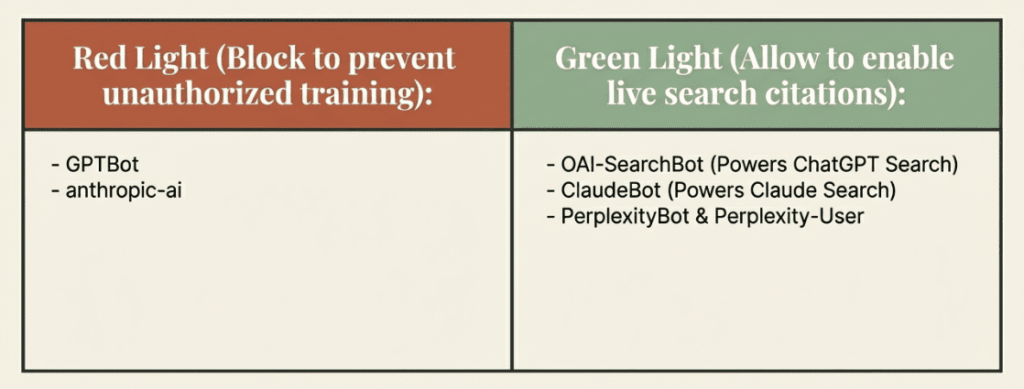

This is one of the most common and most damaging AI visibility issues, and it usually comes from a reasonable place. Brands hear about AI companies training models on scraped content, get worried about their intellectual property, and block everything. The problem is that “everything” includes the bots that power live AI search citations, not just the training crawlers.

The distinction matters enormously:

- GPTBot vs. OAI-SearchBot: GPTBot is for training the next model. If you block it, you’re saying, “Don’t use my data to build GPT-5.” But OAI-SearchBot is for live search. If you block this, ChatGPT’s search feature literally cannot find your website to give you a citation.

- PerplexityBot & Perplexity-User: These handle how Perplexity discovers and fetches your content during a live search. Blocking these is a direct exit from the Perplexity ecosystem.

- ClaudeBot: This is Anthropic’s crawler. Anthropic also documents separate agents such as Claude-User, which fetches pages when a user asks Claude a question. If you want to show up in Claude’s newer search features, be careful about blocking any of these.

Here’s how you can fix it: Audit your robots.txt right now. Allow OAI-SearchBot, ClaudeBot, and PerplexityBot. Block GPTBot and anthropic-ai if you want to prevent foundational model training without attribution. These are separate decisions, and treating them as one is a critical GEO mistake.
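One way to sanity-check that split is to parse a candidate robots.txt with Python’s standard-library `urllib.robotparser` and confirm which bots can reach a given page. The policy below is only an illustration of the allow/block choices described above. The `example.com` URL and the `bot_access` helper are placeholders, and the user-agent tokens should be checked against each vendor’s current documentation.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: allow live-search bots, block training crawlers.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: anthropic-ai
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Allow: /
"""


def bot_access(robots_txt: str, bots: list[str], url: str) -> dict[str, bool]:
    """Return, for each bot name, whether the policy lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


if __name__ == "__main__":
    bots = ["OAI-SearchBot", "PerplexityBot", "ClaudeBot", "GPTBot", "anthropic-ai"]
    report = bot_access(ROBOTS_TXT, bots, "https://example.com/pricing")
    for bot, allowed in report.items():
        print(f"{bot:15} {'allowed' if allowed else 'BLOCKED'}")
```

Running the same check against your live robots.txt (paste its contents into `ROBOTS_TXT`) shows quickly whether a blanket block is catching the search bots too.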

Mistake #2: Writing for Humans, Not for How AI Reads

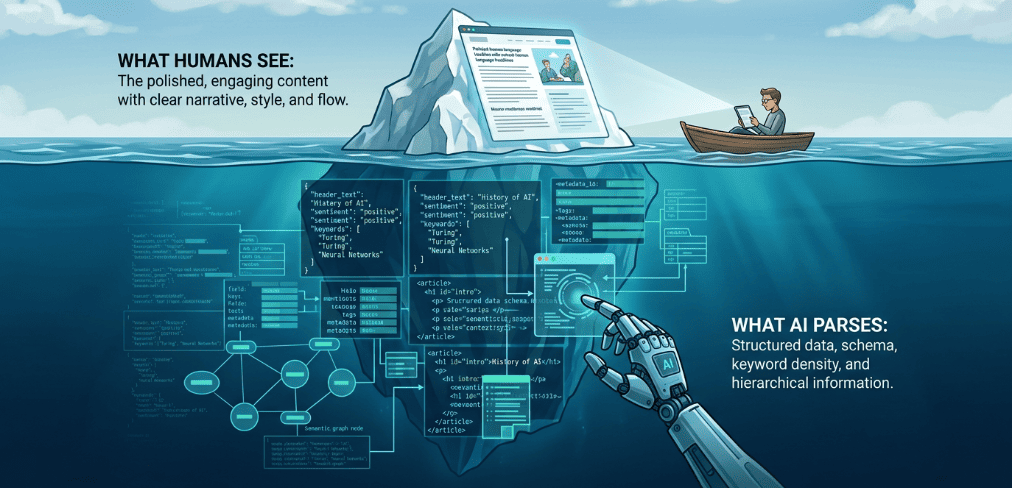

It sounds counterintuitive, but content that feels “branded” and “engaging” to a human often fails the AI test. If your prose is beautiful but lacks density, it’s invisible to an AI model.

AI models process text in chunks. Every time you use a phrase like “industry-leading” or “revolutionary,” you’re using up space without providing any actual data. To a model, these are empty calories. They don’t help the AI answer a user’s question, so the model simply skips over them to find something more citable.

Repeating your target phrase five times doesn’t help anymore. Modern AI understands the relationship between entities. If you’re writing about CRM software, the model already knows what you’re selling. Saying “best CRM” repeatedly provides zero new information gain. Instead, AI models look for specific facts they haven’t found elsewhere, and those facts are what actually earn a citation.

So, to get cited, you need a direct, factual answer in the first 50 words. Use the inverted pyramid method. If an AI can pull a complete answer from your intro, it’s going to credit you. Hide the answer in paragraph four after two paragraphs of “setting the stage,” and a competitor gets cited instead.

Replace adjectives with specifics. For example:

The Claim: “Our software offers fast implementation.” This is vague and unhelpful.

The Fact: “Standard configuration takes 4 hours.” This is specific and citable.

The second version gives AI tools a data point they can actually use to answer a prompt.

Also, people don’t type “best laptop 2026” into an AI assistant. They ask, “What’s a lightweight laptop for a student who does video editing?” Brands that structure pages around these natural language, conversational questions see much higher citation rates. It’s not about keyword density. It’s about matching the intent behind the question.

Here’s how you can fix it: Lead with the answer. Clear out the marketing jargon and replace it with hard numbers, specific use cases, and direct answers. The full framework for optimizing content for AI answers covers exactly how to restructure pages so models can extract and cite them reliably.

Mistake #3: Leaving Core Data Buried and Unstructured

If your pricing is written out in paragraph form, your product specs load via client-side JavaScript, and your competitor comparisons are buried in prose, AI crawlers are likely missing most of it.

AI crawlers have a finite processing budget, just like the models themselves. If a crawler has to execute JavaScript to surface your core product data, there’s a real chance it either skips it entirely or reaches it with too little budget left to process it properly. Client-side JavaScript is one of the biggest technical barriers in GEO that brands underestimate.

Schema markup is your friend here. Use FAQPage schema on Q&A content, JSON-LD to explicitly label entities and their relationships, and Organization schema on your About page. These don’t just help with traditional SEO. They also tell AI models exactly what they’re looking at, reducing the risk of misattribution or being skipped altogether.

Here’s how you can fix this: Use server-side rendering for core product data. Put pricing and comparisons in HTML tables, not paragraphs. Implement schema markup aggressively. Anywhere you have information that an AI should be able to extract cleanly, make sure it’s in raw HTML that a crawler can reach without executing scripts.
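As a rough illustration of “tables, not paragraphs,” here is a minimal sketch that renders plan data as a plain HTML table on the server side, so the numbers are present in the raw HTML. The plan names, prices, and the `pricing_table` helper are hypothetical and only stand in for whatever your templating layer does.

```python
from html import escape


def pricing_table(plans: list[dict[str, str]]) -> str:
    """Render plan data as a plain HTML table that needs no JavaScript to read."""
    headers = ["Plan", "Price", "Seats"]
    head = "".join(f"<th>{escape(h)}</th>" for h in headers)
    rows = []
    for plan in plans:
        # Each header maps to a lowercase key in the plan dict.
        cells = "".join(f"<td>{escape(plan[h.lower()])}</td>" for h in headers)
        rows.append(f"<tr>{cells}</tr>")
    return (
        "<table>\n"
        f"<thead><tr>{head}</tr></thead>\n"
        "<tbody>\n" + "\n".join(rows) + "\n</tbody>\n"
        "</table>"
    )


# Hypothetical plans, for illustration only.
PLANS = [
    {"plan": "Starter", "price": "$19/month", "seats": "Up to 3"},
    {"plan": "Team", "price": "$49/month", "seats": "Up to 10"},
]

if __name__ == "__main__":
    print(pricing_table(PLANS))
```

The point is less the helper itself than the output: every price sits in its own cell next to a labeled header, which is far easier to extract than the same figures woven into a sentence.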

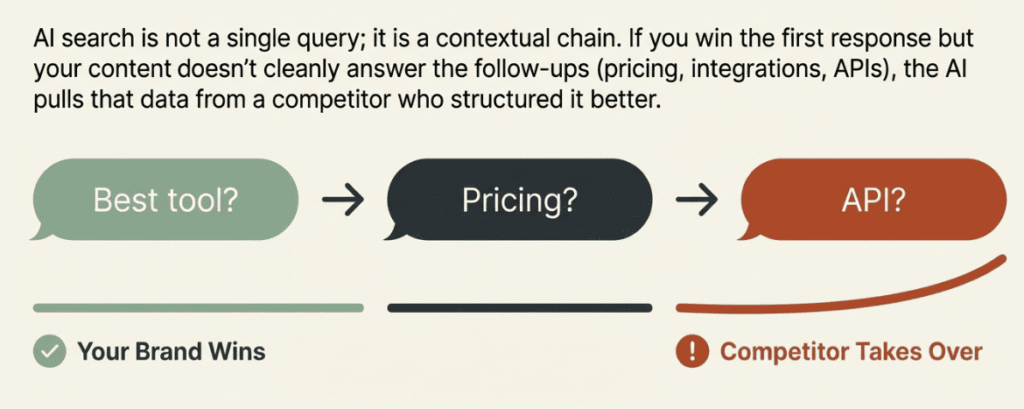

Mistake #4: Optimizing for the First Query and Losing the Conversation

This is an AI optimization error that doesn’t get talked about enough. And it’s probably costing brands more than any other mistake on this list.

AI search is conversational. A user doesn’t ask one question and stop. They ask “What’s the best project management tool for remote teams?” and then follow up with “Which of those has the best API?” and then “Can you compare their pricing?” Each follow-up narrows the conversation toward a buying decision.

If you get mentioned in the first response but your content doesn’t cleanly answer the follow-up questions, the AI pulls that follow-up data from whichever competitor structured it better. You got the introduction and then disappeared from the conversation. Your competitor closed the deal.

This is where B2B and SaaS brands lose in AI-generated answers without understanding why. The first-mention metric looks fine. But downstream in the same conversation, they’ve been replaced.

The concept of a “contextual chain” is useful here: map the three to five questions a user is most likely to ask after first discovering your brand. Does your content answer them clearly, in AI-readable format, without requiring the user to navigate to a separate page, fill in a form, or hunt through your site structure?

Pricing tables, API summaries, integration lists, and use-case comparisons all need to exist in a format that AI models can extract and cite. If they’re locked in sales copy, protected by paywalls, or scattered across five different pages, you’re not answering the follow-up questions. Someone else is.

Here’s how you can fix it: Build a contextual chain for each core topic your brand owns. Audit the next three to five questions a user would ask after a first-touch AI response. Make sure your content answers them on the same page or through clearly structured internal links, and that the answers are in formats AI crawlers can actually read.

Mistake #5: Treating GEO as a Single-Platform Strategy

ChatGPT with browsing, Perplexity, and Google AI Overviews all retrieve and cite content differently. Optimizing for one doesn’t mean you’re visible on the others.

21% of Google searches now trigger an AI Overview (Safari Digital). Google AI Overviews are still heavily tied to traditional SEO. If your page already has high organic authority, you have a head start. However, Google now prioritizes “Information Gain.” It is looking for that unique data point or expert insight that does not exist on ten other websites.

Perplexity is more aggressive at crawling and prioritizes freshness and structured data. Understanding how Perplexity retrieves content helps you see why the same page can perform very differently across platforms.

ChatGPT (driven by the OAI-SearchBot) is obsessed with structure. It needs to parse your site quickly to find “ground truth” facts. Using clean HTML and clear entity definitions is the baseline. It favors content with a high density of unique, verifiable facts, which is what gives it confidence to cite you as a primary source.

The good news is there’s a strong common foundation across all of these AI search tools: entity consistency, structured data, AI crawler access, and authoritative source mentions. Get those right, and you’re building GEO visibility that works across platforms. Then layer in platform-specific adjustments on top.

Here’s how you can fix this: Don’t optimize for one AI search platform at the expense of the others. Build the foundations that work everywhere first: clean crawlability, schema, entity consistency, and external authority signals. Then tune for specific platforms based on where your audience is actually asking questions.

Mistake #6: Abandoning SEO While Chasing GEO, or Ignoring GEO Because Your SEO Is Strong

Abandoning SEO while chasing GEO is a mistake. So is sitting on strong organic rankings and assuming you don’t need to think about answer engine optimization at all. Both directions are wrong.

AI crawlers depend on the same technical hygiene that search engines have always required. Crawlability, clean HTML, structured data, page speed, and mobile rendering aren’t just SEO boxes to tick. They’re the baseline that AI systems need in order to access and process your content reliably.

GEO without SEO means content AI crawlers can’t reach or trust. SEO without GEO means ranking in ten blue links that fewer people are clicking, as behavior shifts toward AI assistants. And according to Search Engine Land, 37% of people now start their search with AI models.

A practical way to think about it: if your page doesn’t rank and doesn’t get crawled, it can’t be cited. If it ranks but lacks the structural signals AI models need, it gets crawled but not cited. You need both layers.

Here’s how you can fix it: Treat traditional SEO as the foundation. Core Web Vitals, clean site architecture, crawlability, and schema markup serve both SEO and GEO simultaneously. Once that foundation is solid, GEO optimization is about layering structure, entity clarity, and information density on top.

Mistake #7: Your Brand Has No Consistent Entity Home

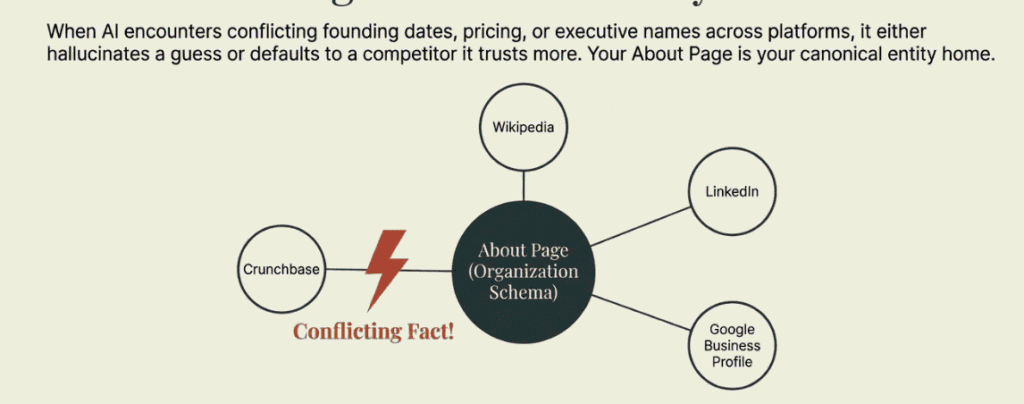

When an AI model encounters conflicting information about a brand, such as different founding dates, different pricing figures, or contradictory product descriptions across platforms, it has two options. It either hallucinates a guess or defaults to a source that it trusts more than your own site. Neither outcome is good.

Entity consistency means your founding year, product description, pricing, and key executive names match exactly across your website, LinkedIn, Crunchbase, Wikipedia, and Google Business Profile. Anywhere the facts conflict, AI systems fill the gap with a guess. That guess is often wrong, and once it’s embedded in model responses, it’s hard to correct.

Your About page is your canonical entity home. It should carry Organization schema and contain the clear, factual information you want associated with your brand: what your company does, what category it operates in, and what differentiates it. All of it should be stated plainly, not in marketing language.
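To make that concrete, here is a minimal sketch that builds an Organization JSON-LD tag for an About page. The properties used (`name`, `url`, `foundingDate`, `description`, `sameAs`) are standard schema.org Organization properties, but the company details and the `organization_jsonld` helper are hypothetical, and a real implementation would likely include more fields.

```python
import json


def organization_jsonld(name: str, url: str, founding_date: str,
                        description: str, same_as: list[str]) -> str:
    """Build an Organization JSON-LD <script> tag for an About page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "foundingDate": founding_date,
        "description": description,
        # Links to the external profiles that should describe the same entity.
        "sameAs": same_as,
    }
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical company details, for illustration only.
TAG = organization_jsonld(
    name="Acme CRM",
    url="https://www.example.com",
    founding_date="2016",
    description="Acme CRM is customer relationship management software "
                "for small sales teams.",
    same_as=[
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
    ],
)

if __name__ == "__main__":
    print(TAG)
```

Note that the description is a plain statement of what the company does and for whom, which is the same tone the visible About page copy should use.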

Here’s how you can fix it: Audit every external profile for factual consistency. On your website, LinkedIn, Crunchbase, Wikipedia, Google Business Profile, and anywhere else your brand appears, the core facts need to match. Build your About page as the canonical source of truth with a full Organization schema. Treat inconsistencies as an active liability, not just a housekeeping issue.
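The audit itself can start as a simple comparison. The sketch below assumes you have copied the core facts from each profile into a dictionary by hand. The profile data and the `find_conflicts` helper are hypothetical, and the comparison ignores case and surrounding whitespace, which is a design choice you may want to tighten if you need exact matches.

```python
def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return each fact whose value differs between sources, with the value per source."""
    fields = sorted({field for facts in profiles.values() for field in facts})
    conflicts = {}
    for field in fields:
        # Normalize lightly so "CRM Software" and "CRM software" count as a match.
        seen = {
            source: facts[field].strip().casefold()
            for source, facts in profiles.items()
            if field in facts
        }
        if len(set(seen.values())) > 1:
            conflicts[field] = {source: profiles[source][field] for source in seen}
    return conflicts


# Hypothetical facts collected manually from each profile.
PROFILES = {
    "website": {"founding_year": "2016", "category": "CRM software"},
    "linkedin": {"founding_year": "2016", "category": "CRM Software"},
    "crunchbase": {"founding_year": "2017", "category": "CRM software"},
}

if __name__ == "__main__":
    for field, values in find_conflicts(PROFILES).items():
        print(f"Conflict in {field}: {values}")
```

In this made-up data, only the founding year is flagged, because one profile lists a different year from the other two.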

Mistake #8: Building No External Authority Signals

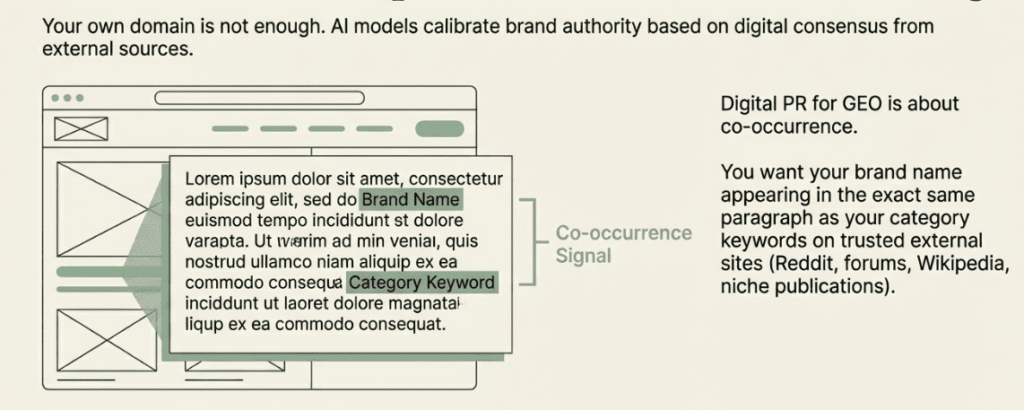

Your own domain is not enough. AI models learn who you are and what category you belong to from the consensus of what authoritative third-party sources say about you. If your brand never appears alongside your target category keywords on external sites, the AI models don’t form a strong association between the two.

External sources like Reddit, niche forums, Wikipedia, news coverage, and industry publications carry disproportionate weight in how AI models calibrate brand authority. A mention in a credible niche blog in your category contributes more to brand authority than ten more pages on your own site.

Digital PR for GEO is different from link-building for traditional SEO. You’re not just after links. You want your brand name appearing in the same paragraph as your category keywords on trusted external authority sites. That co-occurrence signal is what tells AI models that your brand belongs in conversations about that category.

One important thing to note: Quality matters as much as volume. If the dominant external content about your brand is negative, such as critical reviews, complaint threads, or unfavorable press, AI models absorb that signal too. A strong digital footprint built on negative sentiment isn’t better than a small one. The goal is accurate, positive-to-neutral external representation at scale.

Here’s how you can fix it: Identify the 10-15 external sources your category competitors are being cited from in AI-generated answers. Earn editorial placements there with content that naturally connects your brand to your category. Monitor existing external mentions and address significant negative content where it’s possible to do so.

Mistake #9: Measuring Clicks Instead of Citations

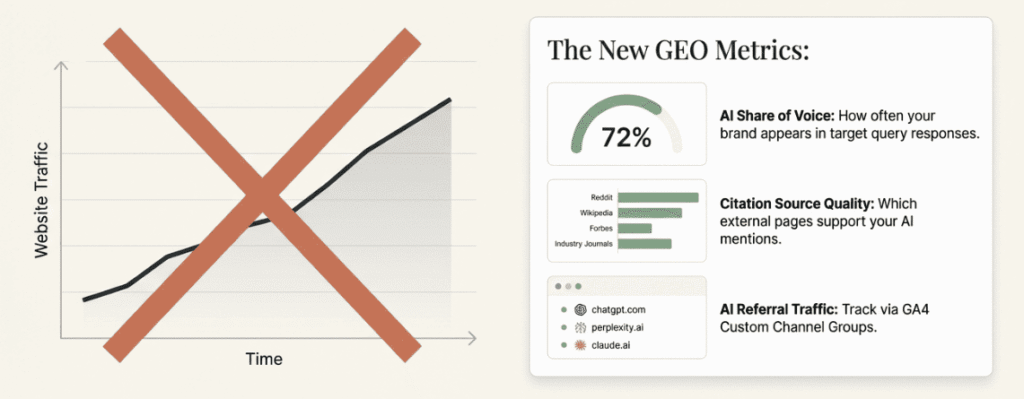

AI search is often zero-click. The model answers the question fully, and the user never visits anyone’s site.

One user described exactly this pattern in a Reddit thread. Even when the content is correct, well-structured, and cited as a source in an AI Overview, traffic to that page still drops because the question is already answered on the SERP. Pages aren’t performing worse. The answer just never leaves Google.

Getting cited in AI Overviews ≠ getting clicks

by u/gromskaok in Sitechecker

Measured by traffic volume, this looks like a loss. In reality, the question is whether you got cited at all, because if you didn’t, a competitor did, and they got the brand exposure while you got nothing.

“The opportunity isn’t disappearing. It’s migrating. Brands that treat AI citations as a primary KPI, not a curiosity, will capture the traffic others lose.”

— Rand Fishkin, CEO and Co-founder, SparkToro

The right measurement framework for GEO visibility looks different from traditional SEO analytics. So, here are a few metrics that actually matter:

- AI share of voice: How often your brand appears in responses to your target queries.

- Citation source quality: Which external pages support your brand mentions in AI responses.

- Referral traffic from AI platforms: tracked separately in GA4 (chatgpt.com, perplexity.ai, claude.ai, etc.)

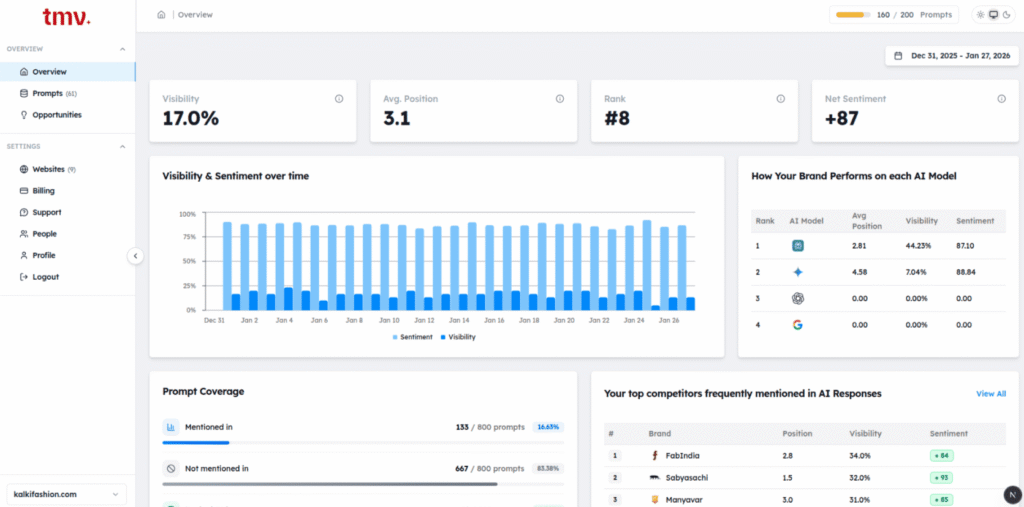

The baseline is manual: take your 10-15 most important questions, query them across the major AI platforms weekly, and track which brands get cited and from which sources. It’s time-consuming, but it gives you a real picture of where you stand. As you scale, tools like Track My Visibility let you monitor AI search citations systematically so you’re not doing this manually across every platform every week.
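If you record those weekly checks in a structured log, share of voice becomes a simple ratio. The sketch below assumes one log entry per query per platform. The brands, queries, source domains, and both helper functions are hypothetical, and “share of voice” here just means the fraction of recorded responses that cite the brand.

```python
from collections import Counter


def share_of_voice(observations: list[dict], brand: str) -> float:
    """Fraction of recorded AI responses in which `brand` was cited."""
    if not observations:
        return 0.0
    hits = sum(1 for obs in observations if brand in obs["cited_brands"])
    return hits / len(observations)


def top_sources(observations: list[dict], n: int = 3) -> list[tuple[str, int]]:
    """Most frequently cited source domains across all recorded responses."""
    counts = Counter(src for obs in observations for src in obs["sources"])
    return counts.most_common(n)


# Hypothetical log of one week's manual checks.
LOG = [
    {"query": "best CRM for small teams", "platform": "ChatGPT",
     "cited_brands": ["Acme", "Rival"], "sources": ["forum.example", "reviews.example"]},
    {"query": "best CRM for small teams", "platform": "Perplexity",
     "cited_brands": ["Rival"], "sources": ["forum.example"]},
    {"query": "CRM with the best API", "platform": "ChatGPT",
     "cited_brands": ["Acme"], "sources": ["forum.example", "acme.example"]},
    {"query": "CRM with the best API", "platform": "Perplexity",
     "cited_brands": ["Rival"], "sources": ["reviews.example"]},
]

if __name__ == "__main__":
    print(f"Acme share of voice: {share_of_voice(LOG, 'Acme'):.0%}")
    print(f"Rival share of voice: {share_of_voice(LOG, 'Rival'):.0%}")
    print("Top sources:", top_sources(LOG, 2))
```

The source counts are the more actionable half: the domains that keep showing up are the places where your brand most needs to be mentioned.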

Here’s how you can fix it: Add AI platform referral tracking in GA4. If you want to save time, you can track your brand visibility using the Track My Visibility tool. Don’t wait until your organic traffic is declining to figure out whether you have an AI visibility issue, because by then, you’re already behind.

How to Do a Fast GEO Health Check (15-Minute Audit)

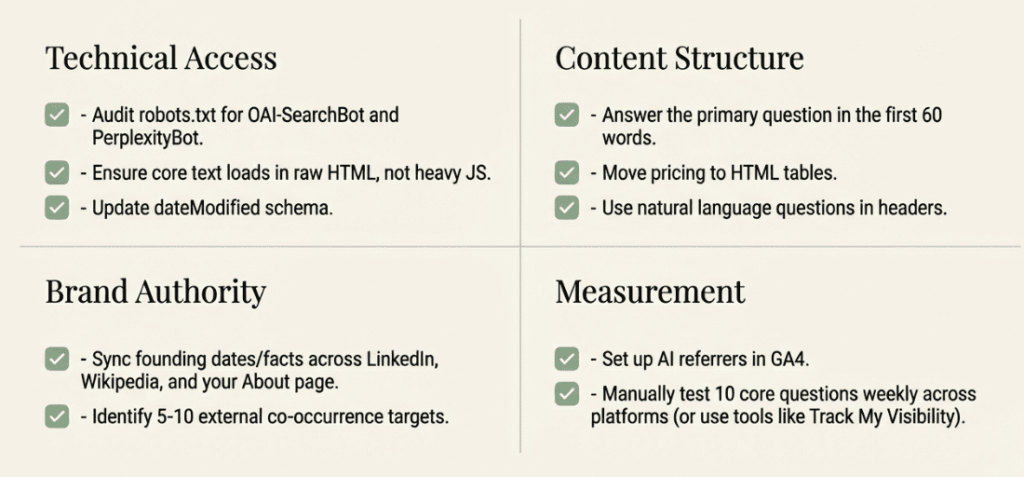

You don’t need a month-long deep dive to find your biggest gaps. Run through these four layers to see if you’re ready for the “Generative” era:

1. Technical Access

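First, make sure the bots can actually get in. Your robots.txt should explicitly allow OAI-SearchBot (for ChatGPT Search), PerplexityBot, and ClaudeBot. It’s also worth making a deliberate decision about GPTBot and Google-Extended. Those tokens control whether OpenAI and Google can use your content for model training (and, in Google’s case, for grounding Gemini answers), which is a separate question from whether you can be cited in live search results.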

Beyond that, verify that your core content is visible in raw HTML. If your site relies on heavy JavaScript to load its text, AI crawlers might miss the context entirely.

Finally, check your dateModified schema. AI models prioritize freshness, so keep those timestamps accurate on your high-value pages.
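Both of the last two checks can be approximated without a browser. The sketch below uses Python’s standard-library `html.parser` to collect the text that is present in the raw HTML (ignoring anything inside script and style tags) and to read `dateModified` from a JSON-LD block. The sample page, the date, and the `audit` helper are hypothetical, and a real page would be fetched rather than hard-coded.

```python
import json
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collect text visible without running scripts, plus any JSON-LD blocks."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.jsonld_blocks = []
        self._buffer = []
        self._mode = "text"  # "text", "skip" (script/style), or "jsonld"

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._mode, self._buffer = "jsonld", []
        elif tag in ("script", "style"):
            self._mode = "skip"

    def handle_endtag(self, tag):
        if tag == "script" and self._mode == "jsonld":
            self.jsonld_blocks.append("".join(self._buffer))
        if tag in ("script", "style"):
            self._mode = "text"

    def handle_data(self, data):
        if self._mode == "text":
            self.text_parts.append(data)
        elif self._mode == "jsonld":
            self._buffer.append(data)


def audit(html: str, must_contain: list[str]) -> dict:
    """Report key phrases missing from raw HTML and the dateModified value, if any."""
    parser = RawHtmlAudit()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.text_parts).split())
    modified = None
    for block in parser.jsonld_blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # Skip malformed JSON-LD rather than failing the audit.
        if isinstance(data, dict) and "dateModified" in data:
            modified = data["dateModified"]
    missing = [phrase for phrase in must_contain if phrase not in text]
    return {"missing_phrases": missing, "date_modified": modified}


# Hypothetical page source, for illustration only.
SAMPLE_HTML = """<html><body>
<h1>Acme CRM pricing</h1>
<p>Standard configuration takes 4 hours.</p>
<script type="application/ld+json">{"@type": "Article", "dateModified": "2026-01-15"}</script>
<script>document.title = "Loaded by JavaScript only";</script>
</body></html>"""

if __name__ == "__main__":
    print(audit(SAMPLE_HTML, ["Standard configuration takes 4 hours",
                              "Loaded by JavaScript only"]))
```

Any phrase that lands in `missing_phrases` exists only after scripts run (or not at all), which is exactly the content a non-rendering crawler would never see.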

2. Content Structure

AI assistants love to “extract” rather than “read.” Try to make the first 50 words of every key page answer the primary question directly. If you have pricing or comparison data, put it in standard HTML tables; prose is harder for a machine to parse accurately.

One of the most common GEO mistakes at this stage is focusing on just keyword density and exact match phrases instead of writing content that actually answers a question well. That approach doesn’t reflect how generative AI engines evaluate content. They’re not counting keywords. They’re looking for clear, structured answers.

Avoid keyword stuffing in headings and opening paragraphs. A solid GEO strategy means writing for how generative engines actually read, not for how old-school crawlers used to rank.

Use the FAQPage schema for your Q&A sections, and frame your headings as natural language questions. If you write your headings exactly how a human would ask an AI, you’re much more likely to be the chosen answer.
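For reference, FAQPage markup follows a fixed shape: a list of Question items, each with an acceptedAnswer. The sketch below generates that JSON-LD from question and answer pairs. The `faq_jsonld` helper is hypothetical, and the sample pair is taken from this article’s own FAQ.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


FAQ = [
    (
        "What is GEO and how does it differ from SEO?",
        "GEO is about making your content easy for AI tools to trust and cite, "
        "not just rank. While SEO focuses on appearing in search results, GEO "
        "focuses on becoming the source that AI pulls answers from.",
    ),
]

if __name__ == "__main__":
    # Place the output inside a <script type="application/ld+json"> tag on the page.
    print(faq_jsonld(FAQ))
```

The question text in the markup should match the visible heading on the page word for word, so the structured data and the content a crawler reads tell the same story.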

3. Brand Authority

Consistency is everything for building “Entity” trust. Check that your About page matches your profiles on LinkedIn, Crunchbase, and Wikipedia, as any factual conflict can make an AI hesitate to cite you. You also want to see your brand mentioned alongside your target keywords on at least 5 to 10 high-quality external sites.

AI models look for this “digital consensus” to decide if you’re an authority. Keep an eye on the sentiment of these mentions too; you want them to be neutral or positive to avoid being filtered out of recommendations.

4. Measurement

You can’t manage what you don’t track. Since GA4 often buries AI traffic under generic “Referrals,” set up a Custom Channel Group specifically for platforms like chatgpt.com and perplexity.ai. That way, you’re actually seeing where your AI-driven visibility is coming from.
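The channel group itself is configured inside GA4 rather than in code, so the snippet below only sketches the matching logic you would want it to apply. It classifies referrer URLs by hostname using the platforms named in this article. The channel names, the `channel_for` helper, and the exact pattern are assumptions to adapt, not GA4 settings.

```python
import re
from urllib.parse import urlparse

# Matches the listed domains and their subdomains, but not lookalike hosts.
AI_REFERRER = re.compile(r"(^|\.)(chatgpt\.com|perplexity\.ai|claude\.ai)$")


def channel_for(referrer_url: str) -> str:
    """Classify a referrer URL as AI search traffic or a generic referral."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return "AI Search" if AI_REFERRER.search(host) else "Referral"


if __name__ == "__main__":
    samples = [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=best+crm",
        "https://claude.ai/chat",
        "https://news.example.com/article",
    ]
    for url in samples:
        print(f"{channel_for(url):10} {url}")
```

Anchoring the pattern to the end of the hostname keeps unrelated domains that merely contain one of these names out of the AI channel.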

Beyond the data, run a manual check every week. Test 10 of your most important target questions across different AI search tools and see who gets the citation. If it’s not you, look at which external sources the AI is citing to support that answer. That’s your roadmap for where your brand needs to get mentioned next.

Once you’re ready to move beyond manual tracking, Track My Visibility lets you monitor AI search citations systematically across platforms so you’re not piecing the picture together by hand every week.

Conclusion

The brands winning in AI search aren’t the ones publishing the most content. They’re the ones making it easiest for AI models to understand, trust, and cite them. Most of the common GEO mistakes covered here aren’t complicated to fix. They’re just different from what traditional SEO trained everyone to do, and that’s exactly why so many brands are making them.

Start with the audit checklist above. Fix the highest-impact items first: bot access, entity consistency, structured data, and content structure. When generative engines evaluate your pages, these are the signals that move the needle fastest. Then build outward into external authority signals and better measurement. You don’t need to fix everything at once. You need to fix the right things first.

Optimizing content for GEO performance isn’t a one-time project either. Brands that treat it as ongoing work, not a checklist they run once, are the ones that see real GEO success over time.

GEO and SEO aren’t competing priorities. They’re the same foundation, extended for a different kind of search. The brands that treat them that way, and stop making these common AI optimization errors, are the ones that will stay visible as search behavior shifts from traditional to AI-first.

Frequently Asked Questions

What is GEO and how does it differ from SEO?

GEO is about making your content easy for AI tools to trust and cite, not just rank. While SEO focuses on appearing in search results, GEO focuses on becoming the source that AI pulls answers from. The tactics overlap, but the end goal is different.

What AI crawlers should I allow in robots.txt?

Allow OAI-SearchBot, PerplexityBot, and Anthropic's ClaudeBot and Claude-User. These power live AI search citations. You can block GPTBot and anthropic-ai if you want to prevent your content from being used in foundational model training without attribution. The key distinction is between training crawlers and citation crawlers. They're not the same bots.

How do I know if my brand is appearing in AI search results?

Start manually. Query your 10-15 most important questions across ChatGPT, Perplexity, and Google AI Overviews, and record which brands get cited. Track this weekly. For GEO at scale, use a dedicated monitoring tool like Track My Visibility to automate citation tracking across platforms.

Does keyword stuffing hurt GEO performance?

It doesn't help, and it likely hurts. AI models rely on semantic entity relationships, not keyword density. Repeating a phrase provides zero information gain. The model already understands the topic context. Filler text and repeated keyword stuffing consume processing budget without contributing anything citable.

How long does it take to see results from GEO optimization?

Some improvements, like fixing crawlability or adding schema, can show results in a few weeks. But building authority and consistency across the web takes longer. Expect a few months for a stronger, lasting impact.

Can I do GEO without a paid tool?

Yes, you can start with manual checks, technical fixes, and consistent content structure without spending anything. The challenge comes when scaling, as tracking many queries across platforms manually can become difficult over time.

Does my site need to be indexed by Google to appear in AI search results?

For Google AI Overviews, yes, indexing is important. Other platforms don’t strictly require it, but the same technical quality that helps Google index your site also helps AI tools access it. If your site isn’t easily crawlable, visibility will suffer everywhere.